In 2020, more than 2.3 million women were diagnosed with breast cancer worldwide, and 685,000 died.[1] Screening mammography is one of the most successful tools for early breast cancer detection and has been shown to decrease mortality.[2] While medical imaging techniques such as screening mammography have made a significant impact on breast cancer detection, precise diagnosis remains an uphill battle. As the potential for greater diagnostic accuracy grows, clinicians are more determined than ever to try new innovations in their fight against breast cancer.

Industry partners such as GE Healthcare are making new imaging techniques more accessible for routine clinical use by employing artificial intelligence (AI) applications based on deep learning technologies that go beyond breast imaging and aid in cancer detection. Between these new AI applications and high sensitivity imaging techniques such as contrast enhanced mammography (CEM), all efforts point to the same goal—earlier and more accurate breast cancer diagnoses for all women.

“With these new tools, we can really entertain new applications,” explained Constance Lehman, MD, PhD, Professor of Radiology at Harvard Medical School, as well as Director of Breast Imaging and Co-Director of the Avon Comprehensive Breast Evaluation Center at the Massachusetts General Hospital in Boston, Massachusetts. “We can finally move from age and resource-based screening to more precision health, more risk-based screening. In doing this, we can provide greater access to quality care to more women globally.”

Improving breast cancer diagnosis with contrast enhanced mammography

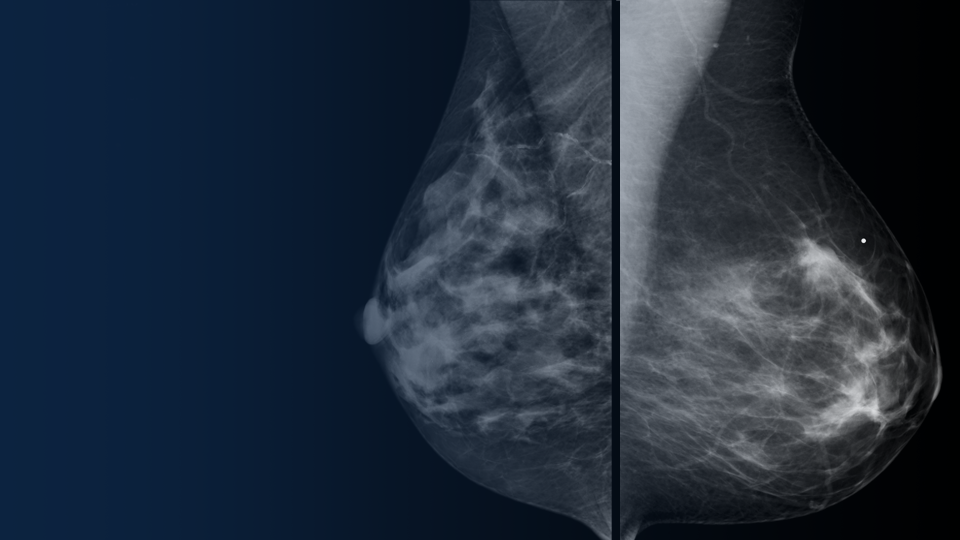

Mammography is very effective as a screening tool. Data from community screening programs show mammography has a sensitivity of approximately 75-85 percent overall[3],[4],[5] and a lower sensitivity (65 percent) in women with dense breasts.[6]
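For readers less familiar with the metric, sensitivity is the share of actual cancers that an exam flags as positive: true positives divided by the sum of true positives and false negatives. The short Python sketch below illustrates the calculation. The counts are hypothetical, chosen only to land within the ranges quoted above, and are not taken from the cited studies.

```python
def sensitivity(true_positives: int, false_negatives: int) -> float:
    """Fraction of actual cancers that the screening exam detected."""
    total_cancers = true_positives + false_negatives
    if total_cancers == 0:
        raise ValueError("sensitivity is undefined when there are no cancer cases")
    return true_positives / total_cancers


# Hypothetical counts for illustration only (not from the cited studies).
overall = sensitivity(true_positives=80, false_negatives=20)
dense_breasts = sensitivity(true_positives=65, false_negatives=35)

print(f"Overall sensitivity: {overall:.0%}")
print(f"Dense-breast sensitivity: {dense_breasts:.0%}")
```

In this illustration, a drop from 80 to 65 detected cancers per 100 means 15 additional cancers go unseen, which is the gap that supplemental techniques aim to close.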

CEM is growing as a diagnostic technique for breast imaging because it allows for the detection of lesions that would otherwise go undetected.[7],[8],[9] Because of its diagnostic imaging quality and ease of implementation, CEM is being adopted for multiple indications when mammography and ultrasound exams have been inconclusive. Also, compared to MRI, CEM can be more easily implemented into routine clinical practice because the equipment is easier to access.[10]

Jason Shames, MD, Assistant Professor and Associate Director of Research in the Division of Breast Imaging, and Co-Director of the Breast Imaging Fellowship at Jefferson University Hospitals in Philadelphia, Pennsylvania, is driving the adoption of CEM into routine clinical practice for patients under his care.

“Compared with conventional diagnostic mammography,” Dr. Shames explained, “contrast-enhanced mammography is a significantly more robust diagnostic tool. It is quick to perform, easy to interpret, and simple to integrate into the breast imaging center with the ability to find lesions otherwise unidentifiable.* These factors translate to increased accessibility of functional imaging as part of the standard diagnostic workup of patients who otherwise wouldn’t be able to reap the benefits of these techniques.”

In addition to using CEM as a diagnostic tool for women with dense breasts, Dr. Shames and his team also use CEM in complex cases, such as clinically suspicious palpable findings with no or poorly defined mammographic or sonographic correlation.

Advancing clinical insights for breast care with AI mammography

Alongside more precise imaging innovations such as CEM, healthcare AI innovation is on the rise.[11] Many AI-based solutions have already been adopted into healthcare environments to improve productivity and efficiency, and newly developed AI solutions and machine learning algorithms are helping radiologists detect breast cancers.

Deep learning, a subset of AI, utilizes neural network models with many levels of features or variables that help predict patient outcomes. Using these deep-learning tools, in addition to radiomics—the detection of clinically relevant features in imaging data beyond what can be perceived by the human eye[12]—clinicians are being aided in breast image analysis like never before:

- Improved sensitivity and faster image processing*

- Enhanced breast density readings that can score patient cases based on how confident the AI is that an image shows a malignancy

- Automated flagging of cases with specific markers to help identify microcalcifications, masses and architectural distortions
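As a rough illustration of how the case scoring and automated flagging in the list above could feed a reading worklist, the Python sketch below sorts cases by a confidence score and flags those above a threshold. Everything here is hypothetical: the `Case` structure, the 0-100 score scale, the `FLAG_THRESHOLD` value, and the sample cases are assumptions made for illustration and do not describe any vendor's product or API.

```python
from dataclasses import dataclass, field


@dataclass
class Case:
    case_id: str
    ai_score: float  # hypothetical 0-100 confidence that the image shows a malignancy
    findings: list[str] = field(default_factory=list)  # e.g. "mass", "microcalcifications"


FLAG_THRESHOLD = 60.0  # assumed cut-off; a real value would need clinical validation


def prioritize(cases: list[Case]) -> list[tuple[Case, bool]]:
    """Return cases sorted by descending AI score, each paired with a flag."""
    ranked = sorted(cases, key=lambda c: c.ai_score, reverse=True)
    return [(c, c.ai_score >= FLAG_THRESHOLD) for c in ranked]


# Made-up worklist for illustration only.
worklist = [
    Case("A-001", 12.0),
    Case("A-002", 87.5, ["mass"]),
    Case("A-003", 64.0, ["microcalcifications", "architectural distortion"]),
]

for case, flagged in prioritize(worklist):
    marker = "FLAG" if flagged else "    "
    print(f"{marker} {case.case_id} score={case.ai_score:5.1f} {', '.join(case.findings)}")
```

Sorting rather than filtering keeps every case on the worklist, so the radiologist still reads all exams and the score only changes the order of attention.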

“The opportunities seem endless,” said Peter Eby, MD, Section Head of Breast Imaging at Virginia Mason Medical Center in Seattle, Washington. “I consider it as a whole pathway of care from image to diagnosis to patient. There’s an opportunity for AI to improve mammogram quality and improve reporting quality and consistency.”

Dr. Eby’s team initially adopted AI software to use the automated breast density readings, he explained, but they also hoped they could reduce some unnecessary recalls.

“What we found is not only did the advantages of automated density readings improve our consistency, [but] we also were able to reduce some technical recalls. It seems to be working across the system so that the quality of mammography has gone up at every single site.”

Gaining efficiency in the breast imaging workflow with new tools

Workflow efficiency in medical imaging is a top priority across all specialties. In women’s health imaging, it is essential to reduce the time from imaging to diagnosis so women can begin treatment as quickly as possible. To avoid disrupting that critical window, AI tools must work seamlessly within the existing workflow so additional steps do not slow down clinicians.

The introduction of computer-aided detection (CAD) for breast cancer was widely anticipated to have a significant impact. However, the CAD tools were often complex and required additional work by the radiologist, resulting in slow adoption, poor performance and little trust that AI was the answer.

“We learned from the history of CAD that we were not as rigorous as we needed to be,” explained Dr. Lehman. “We have the advantage in breast imaging to really lead in this [new AI] domain. In our area of risk assessment with AI, we’re going to take it to the next level.”

Radiologists face many AI tool options and are collecting the scientific evidence needed to support routine adoption of these tools in the clinical workflow for women’s health imaging.

“We’re going to look at rigorous science,” explained Fiona Gilbert, MD, Professor of Radiology, University of Cambridge School of Clinical Medicine. “We’re going to have partnerships across the industry, [with] academics and community practices. And of course, it’s not just ‘how good is the tool’ or ‘what is the diagnostic accuracy of the tool,’ but how [does] the radiologist interact with the tool and how easy is it to deploy the tool into the hospital system.”

Empowering patients and providers with new data and innovative solutions

The new AI tools for breast imaging represent an exciting era for radiology, with fine-tuned radiomics offering clinicians the opportunity to spend more time on cases more likely to contain a cancer, improving patient outcomes in the moments that matter.

“There are challenging decisions our patients and providers have to make,” explained Dr. Lehman. “Not only can we [now] leverage the power of genomics in big data, but also radiomics, in which we’ve barely been scratching the surface.”

“And combining the two together, I think, is where we’re really going to provide our patients the most power that they need for making some really challenging decisions based on the data we can provide them.”

RELATED CONTENT:

AI beyond CAD. Emerging applications of deep learning in breast imaging

Presented by:

- Fiona J. Gilbert, MD, MBChB, FRCP, FRCR – Professor of Radiology, Head of Department, University of Cambridge School of Clinical Medicine. Honorary Consultant Radiologist, Addenbrooke’s Hospital. University Hospitals NHS Foundation Trust.

- Connie Lehman, MD, PhD – Professor of Radiology, Harvard Medical School. Chief of Breast Imaging, Massachusetts General Hospital.

- Peter Eby, MD – Section Head of Breast Imaging at Virginia Mason Franciscan Health.

- Moderated by: Mario Lois – Global Head & Senior Director, AI and Intelligent Solutions for Women’s Health, GE Healthcare

- Learn more about GE Healthcare’s SerenaBright™ HD, a contrast enhanced mammography solution.

- Learn more about GE Healthcare’s ProFound AI™ by iCAD for Senographe Pristina™, a deep learning-based concurrent reading solution that helps radiologists improve their cancer detection performance and reduce reading time when interpreting digital breast tomosynthesis (DBT) cases.*

Disclaimers

*Compared to reading without ProFound AI. iCAD labelling and user manual, DTM163 rev B.

Reading times may vary based on the specific functionality of the viewing application used for interpretation.

iCAD data on file. FDA filing: K203822. Standalone performance varies by vendor.

Not all products or features are available in all geographies. Check with your local GE Healthcare representative for availability in your country.

References

[1] https://www.bcrf.org/breast-cancer-statistics-and-resources/

[2] https://radiologykey.com/applications-of-artificial-intelligence-in-breast-imaging/

[3] https://www.ncbi.nlm.nih.gov/pubmed/9807581

[4] https://www.ncbi.nlm.nih.gov/pubmed/15910949

[5] https://www.ncbi.nlm.nih.gov/pubmed/15282350

[6] https://www.ncbi.nlm.nih.gov/pubmed/9530316

[7] Compared to without contrast

[8] J. Sung et al., Radiology 2019; 00:1-8

[9] V. Sorin et al., American Journal of Roentgenology: W267-W274. 10.2214/AJR.17.19335

[10] https://www.jacr.org/article/S1546-1440(18)30205-9/fulltext

[11] https://www.fiercehealthcare.com/tech/investors-poured-4b-into-healthcare-ai-startups-2019

[12] Vial A, Stirling D, Field M, et al. The role of deep learning and radiomic feature extraction in cancer-specific predictive modelling: a review. Transl Cancer Res 2018;7:803–16.